Your Help Center Is Rotting: How to Detect Stale Knowledge Before Your RAG Bot Starts Lying

RAG knowledge base freshness is not a nice-to-have. If your help center drifts out of date, your RAG bot can still sound confident while delivering answers that are no longer true. The failure mode is subtle: retrieval finds “relevant” text, generation paraphrases it smoothly, and users get outdated policy, old UI steps, or missing context. The fix is not one heroic cleanup sprint. You need a KB freshness system, meaning explicit expiry rules, accountable content owners, automated audits, and “do-not-answer if outdated” logic wired into retrieval and response.

Readiness Checklist TL;DR

- Every article has a last-updated timestamp, an owner, and a validity period

- Articles are tagged by layer (static core, frequent-update, on-demand live)

- New and updated articles are embedded immediately after publish

- Post-ingest smoke tests run against core support questions

- You monitor similarity scores or reranker confidence for low-score hits

- You track embedding drift against a baseline distribution

- Ranking uses a recency prior (half-life decay) as a tie-breaker

- Audits run weekly or after major product releases

- Expired articles auto-deprecate or archive, and trigger re-embedding

- A health dashboard includes relevance, faithfulness, and freshness

- The bot refuses to answer below a confidence-adjusted freshness threshold

Build a freshness pipeline

A freshness system starts with treating documentation like production data: timestamped, versioned, and monitored. Without that, “stale” is just a feeling, and your RAG accuracy will degrade quietly over time.

Stamp and expire everything

Add minimum metadata to every help article:

- Last updated timestamp

- Validity period (how long you trust it before review)

- Owner (a person or team accountable for updates)

The key is the validity period. It turns maintenance from “when someone remembers” into a measurable rule. When an article passes expiry, it becomes a known risk, not a hidden one.
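As a minimal sketch of that metadata, assuming it lives in a simple record per article, the expiry rule reduces to one comparison. The class and field names here (`ArticleMeta`, `last_updated`, `validity_period`) are illustrative, not taken from any particular help center platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ArticleMeta:
    """Minimum freshness metadata for one help article (hypothetical names)."""
    article_id: str
    owner: str                   # person or team accountable for updates
    last_updated: datetime       # timezone-aware timestamp of the last edit
    validity_period: timedelta   # how long the article is trusted before review

    def expires_at(self) -> datetime:
        return self.last_updated + self.validity_period

    def is_expired(self, now: datetime) -> bool:
        # Past expiry means "known risk": the article needs review.
        return now >= self.expires_at()


article = ArticleMeta(
    article_id="refund-policy",
    owner="billing-team",
    last_updated=datetime(2024, 1, 10, tzinfo=timezone.utc),
    validity_period=timedelta(days=90),
)
```

Passing `now` in explicitly, rather than reading the clock inside the method, keeps the rule easy to test and to replay during audits.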

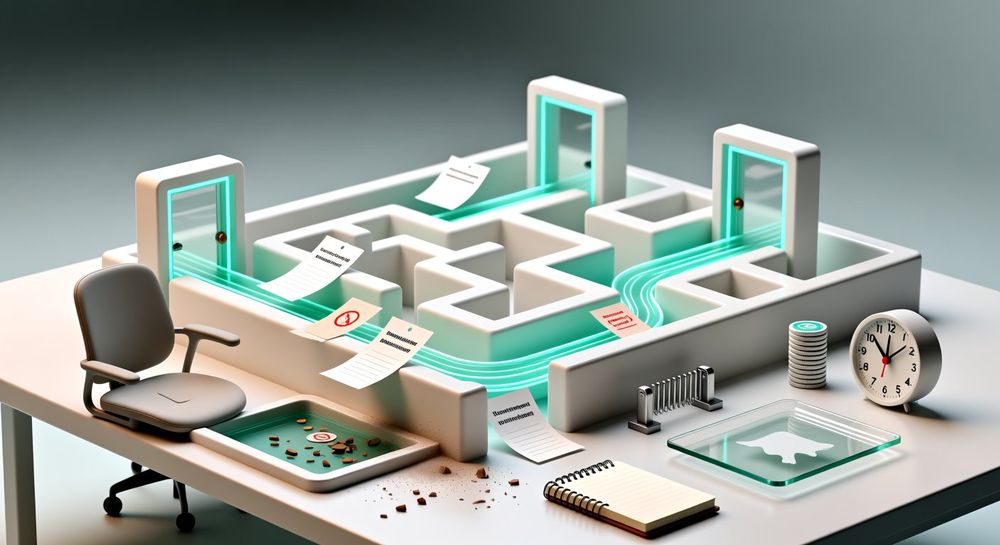

Use layered knowledge

A single blob of “the knowledge base” forces everything into the same update rhythm, which never fits reality. Use a layered architecture:

- Static core layer: concepts that rarely change, with longer SLAs and deliberate version control

- Frequent-update layer: product flows, pricing rules, UI steps, with short SLAs and tighter review cycles

- On-demand live layer: content that stays accurate only through rapid updates. It is still version-controlled, but maintained with the expectation that it will change

Each layer needs its own expiry defaults and review cadence. This is how you scale help center maintenance without burning out editors.
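One way to make per-layer defaults explicit is a small lookup table. The durations below are placeholders chosen for illustration, not recommendations, and the `Layer` and `LAYER_DEFAULTS` names are assumptions.

```python
from datetime import timedelta
from enum import Enum


class Layer(Enum):
    STATIC_CORE = "static_core"
    FREQUENT_UPDATE = "frequent_update"
    ON_DEMAND_LIVE = "on_demand_live"


# Placeholder values: tune these to your own release rhythm.
LAYER_DEFAULTS = {
    Layer.STATIC_CORE: {"validity": timedelta(days=365), "review_every": timedelta(days=180)},
    Layer.FREQUENT_UPDATE: {"validity": timedelta(days=60), "review_every": timedelta(days=30)},
    Layer.ON_DEMAND_LIVE: {"validity": timedelta(days=7), "review_every": timedelta(days=7)},
}


def default_validity(layer: Layer) -> timedelta:
    """Expiry default applied when an article does not set its own validity period."""
    return LAYER_DEFAULTS[layer]["validity"]
```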

Embed on publish, not later

For RAG systems, freshness is not only “the article is updated,” it is also “the index reflects it.” Embed new or updated articles as soon as they are published so retrieval can see the latest version immediately.

Treat embedding as part of your publishing pipeline, not a batch job someone runs when things feel off.
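A rough sketch of what "embedding as part of publishing" can look like: the same function that saves the article also refreshes its vector. The in-memory `store` and `index` dictionaries and the `toy_embed` function are stand-ins for your CMS, vector database, and embedding model.

```python
from typing import Callable, Dict, List

Vector = List[float]


def publish(article_id: str, text: str,
            embed: Callable[[str], Vector],
            store: Dict[str, str],
            index: Dict[str, Vector]) -> None:
    """Save the article and refresh its vector in the same step."""
    store[article_id] = text
    index[article_id] = embed(text)  # replaces the vector of any earlier version


def toy_embed(text: str) -> Vector:
    # Stand-in for a real embedding model: character count and word count only.
    return [float(len(text)), float(len(text.split()))]


store: Dict[str, str] = {}
index: Dict[str, Vector] = {}
publish("reset-password", "Open Settings, then Security.", toy_embed, store, index)
publish("reset-password", "Open Profile, then Security, then Reset.", toy_embed, store, index)
```

After the second call the index holds exactly one vector for the article, computed from the latest text.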

Detect staleness with retrieval signals

Even with expiry rules, you want early warning signs that the retrieval layer is struggling. Retrieval signals are often the first measurable symptom that documentation has gone stale.

Smoke-test after every ingest

After each ingest (new embeddings or updates), run automated smoke-test queries against the index. These should be your core support questions, the ones you cannot afford to get wrong.

The goal is simple: verify that top-ranked chunks still answer the question. If retrieval starts surfacing irrelevant or outdated chunks for a core question, you have a freshness problem, even if no article has “expired” yet.

Keep smoke tests focused. You are not trying to cover every edge case, you are checking that the backbone of your support knowledge management still holds.
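The smoke-test idea can be sketched as a small harness. It assumes a retriever that takes a query and returns chunk IDs, best first, and a hand-maintained map from each core question to the chunk IDs that should answer it. The `fake_retrieve` function and all IDs are made up for the example.

```python
from typing import Callable, Dict, List, Sequence, Set

# A retriever takes a query and returns the IDs of the top-ranked chunks, best first.
Retriever = Callable[[str], Sequence[str]]


def run_smoke_tests(retrieve: Retriever,
                    cases: Dict[str, Set[str]],
                    top_k: int = 3) -> List[str]:
    """Return the queries whose top_k results contain none of the expected chunk IDs."""
    failures = []
    for query, expected_ids in cases.items():
        top = list(retrieve(query))[:top_k]
        if not expected_ids.intersection(top):
            failures.append(query)
    return failures


def fake_retrieve(query: str) -> Sequence[str]:
    # Canned results standing in for a real vector search.
    canned = {
        "How do I reset my password?": ["password-reset#1", "security#2"],
        "How do I get a refund?": ["pricing-2021#4", "changelog#9"],
    }
    return canned.get(query, [])


CORE_CASES = {
    "How do I reset my password?": {"password-reset#1"},
    "How do I get a refund?": {"refund-policy#1"},
}
failures = run_smoke_tests(fake_retrieve, CORE_CASES)
```

Here the refund question fails because retrieval surfaces an old pricing page instead of the refund policy, which is exactly the kind of regression the post-ingest check is meant to catch.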

Watch similarity and reranker confidence

Monitor similarity-score distributions (or reranker confidence if you use re-ranking). Low-score hits are a common signature of missing or outdated context:

- The model “kind of” recognizes the topic, but nothing matches strongly

- Retrieval drifts toward older, generic explanations

- The system fills gaps during generation, which is where “lying” begins

You do not need perfect thresholds to start. You need alerts when the distribution shifts, and a workflow for what happens next (triage, update, deprecate, or add new content).
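A simple starting point for "alert when the distribution shifts" is to compare a recent window of top-hit scores with a baseline window. The thresholds below are arbitrary illustrations, and the function names are assumptions.

```python
from statistics import mean
from typing import Sequence


def low_score_rate(scores: Sequence[float], threshold: float) -> float:
    """Share of top-hit scores that fall below the threshold."""
    return sum(s < threshold for s in scores) / len(scores)


def score_shift_alert(baseline: Sequence[float],
                      recent: Sequence[float],
                      threshold: float = 0.5,
                      max_rate_increase: float = 0.10,
                      max_mean_drop: float = 0.05) -> bool:
    """Flag a shift when low-score hits rise or the mean score drops noticeably."""
    rate_increase = low_score_rate(recent, threshold) - low_score_rate(baseline, threshold)
    mean_drop = mean(baseline) - mean(recent)
    return rate_increase > max_rate_increase or mean_drop > max_mean_drop


baseline_scores = [0.80, 0.82, 0.79, 0.85, 0.81]
healthy_week = [0.80, 0.78, 0.83, 0.80, 0.82]
degraded_week = [0.80, 0.45, 0.40, 0.78, 0.42]
```

An alert is only a prompt for triage. It does not say which article is stale, only that retrieval is matching less strongly than it used to.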

Add embedding drift detection

Embedding drift is another early signal. Track when the statistical properties of newly generated vectors diverge from the baseline distribution.

Drift can mean multiple things in practice:

- New content differs structurally from older content

- The representation changes enough that retrieval behavior shifts

- Your index starts behaving differently for the same questions

You are not using drift to “prove” content is wrong. You are using it to flag that the system’s retrieval assumptions may be changing, which raises the risk of stale or missing answers.
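There are many ways to measure drift. One deliberately crude sketch compares the centroid of newly generated vectors with the centroid of a baseline set using cosine distance. This is an assumed heuristic for illustration, not a standard metric, and real vectors would have far more dimensions.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def centroid(vectors: Sequence[Vector]) -> List[float]:
    """Component-wise mean of a set of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_distance(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norms


def drift_score(baseline: Sequence[Vector], new: Sequence[Vector]) -> float:
    """0 means the new batch points the same way as the baseline; larger means more drift."""
    return cosine_distance(centroid(baseline), centroid(new))


baseline_vectors = [[1.0, 0.0], [0.9, 0.1]]
similar_batch = [[0.95, 0.05]]
shifted_batch = [[0.0, 1.0], [0.1, 0.9]]
```

A centroid comparison misses changes in spread or shape, so treat a low score as "no obvious shift" rather than proof that nothing changed.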

Prefer new, but verify

Freshness should influence ranking, but it must not bulldoze relevance. The system should prefer newer documents only when they are reasonably relevant, and avoid old content unless it has a much stronger semantic match.

Apply a recency prior

Use a simple recency prior during ranking, such as a half-life decay factor. Conceptually:

- Newer documents get a small boost

- Older documents slowly decay in rank

- A much higher semantic match can still win

This aligns ranking with reality: policies and UI steps age, but foundational concepts can remain accurate longer. The half-life idea lets you tune that behavior without hard-cutting everything older than a certain date.
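A half-life prior can be sketched as a multiplier on the semantic score: the weight halves its distance to a floor every `half_life_days`. The floor, the default half-life, and the function names are assumptions chosen so that recency acts as a tie-breaker rather than a hard cutoff.

```python
def recency_weight(age_days: float, half_life_days: float, floor: float = 0.8) -> float:
    """Weight in [floor, 1.0]: 1.0 for a brand-new document, approaching floor as it ages."""
    decay = 0.5 ** (age_days / half_life_days)
    return floor + (1.0 - floor) * decay


def ranked_score(similarity: float, age_days: float,
                 half_life_days: float = 180.0, floor: float = 0.8) -> float:
    """Semantic similarity scaled by the recency weight."""
    return similarity * recency_weight(age_days, half_life_days, floor)


# Near-tie on similarity: the newer document wins.
newer_wins = ranked_score(0.80, age_days=10) > ranked_score(0.81, age_days=720)

# Much stronger semantic match: the older document still wins.
older_wins = ranked_score(0.95, age_days=720) > ranked_score(0.60, age_days=10)
```

With a floor of 0.8, age can cost a document at most 20% of its score, which bounds how far freshness can override relevance. A shorter half-life for the frequent-update layer and a longer one for the static core would follow the layering described earlier.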

Version control and re-embedding rules

Layered knowledge works best when each layer is version-controlled and has explicit re-embedding triggers.

Use clear triggers such as:

- Article updated or replaced

- Article deprecated or archived

- Major product release (forces review of frequent-update layer)

When a document changes, you want a predictable response: update metadata, deprecate the old version, and re-embed the new one.
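To keep that response predictable, the triggers can be mapped to an explicit action list. The trigger and action names below are hypothetical labels for steps in your own pipeline, and dropping deprecated articles from the index is an assumption about how you want deprecation to behave.

```python
from enum import Enum
from typing import Dict, List


class Trigger(Enum):
    ARTICLE_UPDATED = "article_updated"
    ARTICLE_DEPRECATED = "article_deprecated"
    MAJOR_RELEASE = "major_release"


# Each trigger maps to the ordered steps the pipeline should run (names are illustrative).
ACTIONS: Dict[Trigger, List[str]] = {
    Trigger.ARTICLE_UPDATED: ["update_metadata", "deprecate_old_version", "re_embed"],
    Trigger.ARTICLE_DEPRECATED: ["update_metadata", "remove_from_index"],
    Trigger.MAJOR_RELEASE: ["review_frequent_update_layer"],
}


def plan(trigger: Trigger) -> List[str]:
    """Return a copy of the steps for a trigger so callers cannot mutate the table."""
    return list(ACTIONS[trigger])
```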

Enforce regular audits

Run audits weekly or after major product releases. Audits should surface:

- Articles past expiry

- Articles that are deprecated but still retrievable

- Articles whose top-ranked chunks fail smoke tests

Then enforce the outcome. If an article is past expiry and unreviewed, automatically deprecate or archive it, and trigger re-embedding so retrieval stops leaning on it.

This is the difference between “we have an audit checklist” and an actual freshness system that protects RAG accuracy.
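A sketch of an audit that both reports and enforces, assuming article records, the set of IDs currently in the index, and the set of article IDs behind recent smoke-test failures are available as plain Python values. All names and data are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Set


@dataclass
class Article:
    article_id: str
    last_updated: datetime
    validity_period: timedelta
    deprecated: bool = False


def audit(articles: List[Article], indexed_ids: Set[str],
          smoke_failures: Set[str], now: datetime) -> Dict[str, List[str]]:
    """Collect the three findings an audit should surface."""
    report: Dict[str, List[str]] = {
        "expired": [], "deprecated_but_retrievable": [], "failing_smoke_tests": []}
    for a in articles:
        if not a.deprecated and now >= a.last_updated + a.validity_period:
            report["expired"].append(a.article_id)
        if a.deprecated and a.article_id in indexed_ids:
            report["deprecated_but_retrievable"].append(a.article_id)
        if a.article_id in smoke_failures:
            report["failing_smoke_tests"].append(a.article_id)
    return report


def enforce(articles: List[Article], indexed_ids: Set[str],
            report: Dict[str, List[str]]) -> Set[str]:
    """Deprecate expired articles and return the index IDs that should remain."""
    stale = set(report["expired"]) | set(report["deprecated_but_retrievable"])
    for a in articles:
        if a.article_id in stale:
            a.deprecated = True
    return indexed_ids - stale


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
articles = [
    Article("pricing", datetime(2024, 1, 1, tzinfo=timezone.utc), timedelta(days=90)),
    Article("sso-setup", datetime(2024, 5, 1, tzinfo=timezone.utc), timedelta(days=90)),
    Article("old-ui", datetime(2023, 1, 1, tzinfo=timezone.utc), timedelta(days=90), deprecated=True),
]
report = audit(articles, {"pricing", "sso-setup", "old-ui"}, {"sso-setup"}, now)
remaining = enforce(articles, {"pricing", "sso-setup", "old-ui"}, report)
```

Smoke-test failures are reported but not auto-deprecated here, since a failing test usually needs a human to decide whether the fix is an update, a new article, or a retrieval change.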

Add “do-not-answer” gates

Even with strong maintenance, you need runtime protection. The bot should not answer when it is likely to be wrong. This is where you stop a stale help center from turning into confident fabrication.

Use confidence-adjusted freshness

Configure the bot to refuse to answer or fall back to “I don’t know” when a confidence-adjusted freshness score drops below a safe threshold. This score should combine:

- Retrieval confidence (similarity or reranker confidence)

- Freshness signals (recency prior, expiry status, layer SLA)

- Evaluation signals (from your RAG health dashboard)

The exact formula matters less than the principle: stale plus uncertain equals no answer.
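As one possible formula among many, a weighted average of the three signals is easy to reason about. It assumes each signal has already been normalized to the range 0 to 1, and the weights are placeholders.

```python
from typing import Tuple


def confidence_adjusted_freshness(retrieval_confidence: float,
                                  freshness: float,
                                  eval_score: float,
                                  weights: Tuple[float, float, float] = (0.5, 0.3, 0.2)) -> float:
    """Weighted average of three signals, each expected in [0, 1]."""
    signals = (retrieval_confidence, freshness, eval_score)
    if any(not 0.0 <= s <= 1.0 for s in signals):
        raise ValueError("all signals must be in [0, 1]")
    return sum(w * s for w, s in zip(weights, signals)) / sum(weights)


fresh_and_confident = confidence_adjusted_freshness(0.9, 1.0, 0.9)
stale_and_uncertain = confidence_adjusted_freshness(0.4, 0.2, 0.7)
```

With a threshold somewhere between the two example values, the first query would be answered and the second refused. A multiplicative combination would punish any single weak signal harder, which may suit high-risk topics such as billing or policy.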

Define go/no-go gates

Make the decision to answer explicit. Example go/no-go gates you can implement:

Go (answer) when:

- Top chunks are above your confidence threshold

- Retrieved docs are not expired, or are within the layer’s SLA

- Freshness-adjusted confidence clears your safe threshold

No-go (refuse or fallback) when:

- Confidence is low and retrieved docs are old or expired

- Smoke tests for that intent have been failing recently

- The system detects drift or score-distribution anomalies tied to that topic

No-go should not be treated as failure. It is the system doing its job: preventing outdated or fabricated answers from reaching users.
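The gates above can be written as a single decision function that returns both the verdict and the reasons, so a refusal is always explainable. This sketch takes a strict reading in which any failed go condition blocks the answer. The field names and thresholds are assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class GateInput:
    top_confidence: float       # similarity or reranker confidence of the top chunks
    sources_expired: bool       # True if any retrieved doc is past expiry or its layer SLA
    adjusted_freshness: float   # confidence-adjusted freshness score in [0, 1]
    smoke_tests_failing: bool   # recent smoke-test failures for this intent
    drift_alert: bool           # drift or score-distribution anomaly tied to this topic


def decide(g: GateInput,
           confidence_threshold: float = 0.6,
           freshness_threshold: float = 0.5) -> Tuple[bool, List[str]]:
    """Return (answer?, reasons). An empty reasons list means go."""
    reasons: List[str] = []
    if g.top_confidence < confidence_threshold:
        reasons.append("retrieval confidence below threshold")
    if g.sources_expired:
        reasons.append("retrieved sources expired or outside layer SLA")
    if g.adjusted_freshness < freshness_threshold:
        reasons.append("confidence-adjusted freshness below threshold")
    if g.smoke_tests_failing:
        reasons.append("smoke tests failing for this intent")
    if g.drift_alert:
        reasons.append("drift or score anomaly on this topic")
    return (not reasons, reasons)


go, go_reasons = decide(GateInput(0.82, False, 0.9, False, False))
no_go, no_go_reasons = decide(GateInput(0.41, True, 0.3, False, False))
```

The reasons list doubles as the "why it refused" field when the conversation is escalated.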

Escalate with full context

When you do hand off to a human or a ticket, pass full context so your team can resolve the doc gap quickly:

- User question (verbatim)

- Detected intent

- Retrieved sources and timestamps

- Similarity or reranker confidence signals

- Steps tried (what the bot searched, and why it refused)

- Suggested doc to update (if the system can infer an owner or layer)

This turns “the bot didn’t answer” into actionable support knowledge management work. It also helps you decide whether the right fix is an article update, a new article, a deprecation, or a change to chunking and retrieval.
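One way to carry that context is a structured payload serialized to JSON for whatever ticketing tool you use. The schema below is a hypothetical example that mirrors the list above, not the format of any specific helpdesk API.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class RetrievedSource:
    article_id: str
    last_updated: str   # ISO 8601 timestamp
    score: float        # similarity or reranker confidence


@dataclass
class Escalation:
    user_question: str                      # verbatim
    detected_intent: str
    sources: List[RetrievedSource]
    steps_tried: List[str]
    refusal_reasons: List[str] = field(default_factory=list)
    suggested_doc: Optional[str] = None     # set when an owner or layer can be inferred


ticket = Escalation(
    user_question="Can I still get a refund after 30 days?",
    detected_intent="refund_policy",
    sources=[RetrievedSource("pricing-2021", "2021-03-02T00:00:00Z", 0.44)],
    steps_tried=["vector search: 'refund after 30 days'"],
    refusal_reasons=["retrieval confidence below threshold"],
    suggested_doc="refund-policy",
)
payload = json.dumps(asdict(ticket), indent=2)
```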

Monitor with RAG evaluation dashboards

Integrate RAG evaluation frameworks such as RAGAS or ARES into a health dashboard. Track:

- Answer relevance

- Faithfulness

- Freshness metrics

Freshness belongs next to relevance and faithfulness, not as a separate documentation chore. When these are visualized together, your team can see patterns like “answers are relevant but not fresh,” which is a clear signal that help center maintenance is falling behind.
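As a sketch of putting the three metrics in one row, assume relevance and faithfulness scores arrive from your evaluation framework as plain numbers, and that freshness is logged per answer as a flag. Defining the freshness rate as the share of answers whose cited sources were all within their validity period is an assumption made for this example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AnswerLog:
    relevance: float      # score from your evaluation framework, in [0, 1]
    faithfulness: float   # score from your evaluation framework, in [0, 1]
    sources_fresh: bool   # True if every cited source was within its validity period


def dashboard_row(logs: List[AnswerLog]) -> Dict[str, float]:
    """Average the three health metrics over a batch of logged answers."""
    n = len(logs)
    return {
        "answer_relevance": sum(log.relevance for log in logs) / n,
        "faithfulness": sum(log.faithfulness for log in logs) / n,
        "freshness_rate": sum(log.sources_fresh for log in logs) / n,
    }


row = dashboard_row([
    AnswerLog(0.9, 0.8, True),
    AnswerLog(0.7, 0.9, False),
    AnswerLog(0.8, 0.7, True),
    AnswerLog(0.8, 0.8, True),
])
```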

Conclusion

A rotting help center is not just a content problem, it is a systems problem. Your RAG bot will retrieve something, and if the best available text is outdated, the output will be outdated too, often without obvious warning. Fix this with a KB freshness pipeline: timestamp and expire every document, assign owners, embed updates immediately, smoke-test retrieval after ingest, watch similarity and reranker confidence, and add embedding drift detection. Then enforce layered SLAs, weekly or post-release audits, and a hard “do-not-answer if outdated” gate tied to confidence-adjusted freshness. Tools like SimpleChat.bot make this easy by letting you keep a knowledge base grounded, monitored, and ready for safer RAG responses.